How to polarize & radicalize a person

How social media platforms, as currently designed, are incentivized to polarize people

Have you ever wondered why people seem way more radical and polarized compared to just a few years ago? As if nobody could hold a neutral position on a topic?

Well, what if this weren’t an accident, but purposefully designed?

And what if the reason for this purposeful design is just boring business and marketing seeking optimization?

Today’s email is a repurposing of an article written for my blog in early 2019: a thought experiment to explain how we, as individuals, are being polarized into taking extreme positions on all topics.

Let’s start by assuming that, for any given topic, the array of opinions falls along a spectrum. It’s a bit of a simplification, but it helps in visualizing what I want to convey and it mostly works.

Generally, most people hold a position in the middle of the spectrum. Or in the middle but slightly geared towards one of the sides. Or at least this has been the way for a long time.

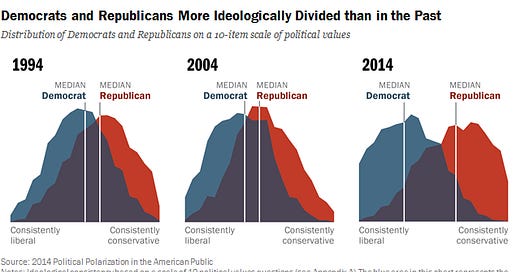

At present, the way social media platforms are designed and the way marketing incentivizes hyper-targeting have led to polarization. In our spectrum visualization, this means that whereas before most people fell close to the middle and only a few at each extreme, now most people fall closer to the extremes.

I don’t like using the example of American politics, as I have zero interest in it and I am not American, but the image below represents perfectly what I want to convey. Apply the same process in the image to every topic, not just politics.

It bears repeating that the point isn’t to explain the polarization in politics. It’s just a subset of the larger problem at hand, which is the polarization of every topic out there.

For this thought experiment, let’s use an easy-to-understand example, like Climate Change. Yes, I know, it is a political topic too. Who cares, it’s easy to illustrate and the point is to convey how polarization happens.

Climate Change Polarization

For this thought experiment, having an opinion on Climate Change means falling somewhere on a spectrum where at one extreme you have Climate Change advocates, and at the opposite extreme you have Climate Change deniers.

Easy to visualize? Great. Let’s start from a neutral position.

A neutral position in this thought experiment could mean agreeing and disagreeing with certain ideas from advocates and agreeing and disagreeing with certain ideas from deniers.

The ideas on which this neutral person agrees with the advocates are that we should be responsible about how we affect our planet, that we should look for green alternatives when possible, and that we should recycle.

And the ideas on which this neutral person agrees with the deniers are that many policies “done for Climate Change” aren’t really about it and are being used as an excuse for higher taxation, and that Climate Change advocacy is developing religious elements.

This person doesn’t hold a strong opinion on any position, but is interested in learning more.

This neutral person now goes onto YouTube, or Twitter, or blogs, etc. to find out more information on the topic of Climate Change. There is a very good chance that whatever he or she stumbles upon first will determine which rabbit hole follows.

And thus, the process of polarization has commenced.

Let’s assume that this neutral person has searched on YouTube for videos explaining Climate Change denialism. He or she already knew something about the main ideas behind it, but wants to be more informed.

This person watches a 10-minute video titled “Why Climate Change Is A Hoax” and calls it a day.

The next day, when logging onto YouTube to follow his or her favorite subscriptions, the feed has a recommendation or two of videos taking a similar position on Climate Change. Something like “Why Climate Change is being used to tax the poor”.

What has happened here is that the person has been sorted and classified as someone interested in Climate Change denialism. This person will now be shown the same content that other people in the same box like to watch.

Now, whenever this person searches for something related to Climate Change advocacy, the result will probably be a video from someone holding the opposite position, “debunking” the advocates’ claims.

Without knowing it, this person is now in a bubble where all content related to Climate Change is clearly friendly to only one stance.

Had this person searched for videos related to Climate Change advocacy, this person would be in the same circumstances but in the opposite position.

What was once a person with a neutral position on Climate Change is now a person who is only exposed to one position. It will be very difficult for this person to truly remain neutral.
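For those who like to see it in code, the loop above can be sketched as a toy simulation in Python. To be clear, this is not how YouTube’s recommender actually works. The catalog, the stance scores (from -1.0 for denier content to +1.0 for advocate content), and the function names are all made up for illustration. The only assumption carried over from the example is that the platform recommends whatever is most similar to what you have already watched.

```python
# Hypothetical catalog: video title -> stance score.
# -1.0 is fully "denier", +1.0 is fully "advocate". All values are invented.
CATALOG = {
    "Why Climate Change Is A Hoax": -0.9,
    "Debunking the climate alarmists": -0.8,
    "Why Climate Change is being used to tax the poor": -0.7,
    "A balanced look at climate policy": 0.0,
    "Why we should recycle more": 0.6,
    "The science behind global warming": 0.8,
    "Debunking the climate deniers": 0.9,
}


def profile(history):
    """The 'box' a viewer is put in: the average stance of what they watched."""
    if not history:
        return 0.0  # nothing watched yet, so the viewer counts as neutral
    return sum(CATALOG[title] for title in history) / len(history)


def recommend(history, k=1):
    """Return the k unwatched videos whose stance is closest to the profile."""
    target = profile(history)
    unwatched = [title for title in CATALOG if title not in history]
    return sorted(unwatched, key=lambda title: abs(CATALOG[title] - target))[:k]


def simulate(first_video, days=2):
    """Watch one video, then accept the top recommendation each day."""
    history = [first_video]
    for _ in range(days):
        recommendations = recommend(history)
        if not recommendations:
            break
        history.append(recommendations[0])
    return history


if __name__ == "__main__":
    for title in simulate("Why Climate Change Is A Hoax"):
        print(f"{CATALOG[title]:+.1f}  {title}")
```

In this sketch, a single first click is enough to keep the balanced video and everything from the other side out of the feed for the following days. Start from an advocate video instead and the same thing happens in the opposite direction.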

General Polarization

Iterate this over time and across all topics, and all you and I will ever see regarding any topic searched is one position clearly being promoted, whereas any other positions are only mentioned antagonistically as a way to reinforce the original one.

“Hah, that wouldn’t happen to me, it is clearly obvious that people from the other position are dumb and stupid. The smart people like me hold X position.”

Don’t be so sure.

Everyone thinks others are the ones who are radicalized and polarized. Everyone thinks others are brainwashed. Everyone thinks they hold the truth.

Well, those “others” think exactly the same about you. That you are the radicalized, polarized, brainwashed and dumb idiot who “cannot see the truth”.

It is currently extremely hard to hold a neutral position on a topic, because it takes a proactive effort to fight against the design of social media platforms and the way marketing is incentivized.

There is no conspiracy here. Reality is usually way more boring.

Recommendation Algorithms & Hyper-Targeting

The reason platforms are designed in such a way is that their business model is to make money from ads. The longer you spend on their platform, the more ads they can show you, and the more businesses are willing to pay for ads.

The main question social media platforms ask themselves every day is: “how can I get people to spend more time on my platform and not on others?” Well, recommendation algorithms.

If I search for football videos on YouTube, there is a big chance that if YouTube recommends more football videos I will continue watching.

Whatever you search for and watch, read, or listen to, you will be recommended something very similar with the goal of keeping you there as long as possible.

There is a reason that social media addiction is on the rise. Platforms hire addiction experts to make their products addictive, after all…

Social media platforms figured out ages ago that if they recommend content similar to what we search for, we will stay there longer. This inevitably leads to filter bubbles and echo chambers.

These are some of the main reasons behind the polarization of every topic.

Now, what about ads?

Well, if I’m a social media platform and I make most of my revenue on ads, I want to keep businesses investing in my platform and paying for ads.

What is more successful: an ad on television, sent to millions who are not the ideal customer, or the same ad sent to fewer people, all of whom perfectly fit the ideal customer?

If I am a social media platform, building the best profiles of my users will make me the most revenue, as anyone who pays for ads seeks a profitable return. A highly specific profile of individuals grouped with others who have similar interests makes for valuable data.
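A quick back-of-the-envelope calculation shows why the advertiser prefers the targeted option. This is only a sketch: every number below (audience sizes, share of ideal customers, conversion rate, profit per sale, cost per impression) is a hypothetical value chosen for illustration, not real advertising data.

```python
def expected_profit(audience, share_ideal, conversion_rate,
                    profit_per_sale, cost_per_impression):
    """Profit from sales to ideal customers minus the cost of showing the ad."""
    sales = audience * share_ideal * conversion_rate
    return sales * profit_per_sale - audience * cost_per_impression


# Hypothetical broad campaign: a huge audience, few of whom are ideal customers.
broad = expected_profit(
    audience=1_000_000,
    share_ideal=0.01,        # assumed: 1% of viewers fit the ideal customer
    conversion_rate=0.02,    # assumed: 2% of ideal customers end up buying
    profit_per_sale=50.0,
    cost_per_impression=0.02,
)

# Hypothetical targeted campaign: fewer people, all of them ideal customers,
# at a higher price per impression.
targeted = expected_profit(
    audience=50_000,
    share_ideal=1.0,
    conversion_rate=0.02,
    profit_per_sale=50.0,
    cost_per_impression=0.05,
)

if __name__ == "__main__":
    print(f"broad campaign profit:    {broad:,.0f}")
    print(f"targeted campaign profit: {targeted:,.0f}")
```

With these made-up numbers the broad campaign loses money while the targeted one is comfortably profitable, even though each targeted impression costs more. That gap is what the advertiser is paying the platform for.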

There is currently no incentive on the social media platforms’ side to promote nuance or non-partisan stances on topics. It just makes less money, and nobody is in business to make less money.

Any talk of “fixing things” that doesn’t involve a change in their business models is all fluff and no substance.

Found this email useful? Share it with those you disagree with and maybe we can make a small change for the better. Also, don’t forget to subscribe!